The Five Barriers Between AI Capability and AI Deployment

A New Framework (PRIME) for Predicting Enterprise AI Adoption in the Real World

Anirudh Bajaj, 2025

In 1969, Chemical Bank installed America’s first ATM in Rockville Centre, New York: a hulking machine that could dispense cash automatically, no human teller required. Bank executives looked at this gleaming robot and saw the future: branches staffed by machines, not people; customers getting their money without waiting in line; and most importantly, massive savings on labor costs as teller positions disappeared. The New York Times reported in 1973 that ATMs would eliminate “up to 75 percent” of teller jobs. It seemed obvious. Why would banks employ humans to hand out cash when a machine could do it faster, cheaper, and twenty-four hours a day?

They were spectacularly wrong.

Between 1985 and 2002, as the number of ATMs in America exploded from 60,000 to 352,000—nearly six times as many—the number of bank tellers didn’t fall. It rose. From 485,000 tellers to 527,000 tellers. The robots designed specifically to replace these workers had spread across every bank in every city, and yet teller jobs grew slightly faster than the overall labor force. Even President Obama got it wrong decades later, citing ATMs in 2011 as a clear example of “technology displacing labor.”

Compare this to travel agents. In 2000, approximately 124,000 Americans worked as travel agents, booking flights and hotels for clients through established reservation systems. Then came Expedia, Travelocity, and a dozen other online booking sites that let customers do exactly what travel agents did—search flights, compare prices, book reservations—with just a few clicks. By 2014, travel agent employment had plummeted to 74,000, a forty percent decline. The technology could do the job, and nothing stopped it from doing so at scale.

Why did one job survive while the other vanished? Both faced automation that could perform their core tasks; both saw that automation deployed widely; both were told their jobs were doomed. The answer reveals everything about how to predict which jobs AI will actually displace versus which will merely transform—and that answer has nothing to do with how capable the AI is.

A Framework Emerges from AI Adoption Reality

An analysis of over 50 enterprise AI deployments across industries revealed a clear pattern: AI adoption in the workplace is happening far more slowly than both market projections and technical capabilities would suggest. The conventional explanations (“change management challenges,” “cultural resistance,” “digital transformation maturity”) capture something real but offer little analytical precision. These concepts don’t help predict when AI will transform specific operations, nor do they help workers or organizations assess genuine risk and opportunity in their contexts.

Most AI job impact research focuses on exposure—measuring which tasks AI could theoretically perform. OpenAI’s influential “GPTs are GPTs” study found that 80 percent of the U.S. workforce could have at least 10 percent of their tasks affected by large language models, with 19 percent potentially seeing half their tasks impacted. GitHub’s Copilot research demonstrated significant productivity gains for software developers. These exposure studies are valuable—they show where AI could have impact—but they make a critical error: they assume technical capability predicts actual deployment.

The reality is different. Exposure measures technical potential; adoption depends on overcoming specific, identifiable barriers. An occupation might score high on AI exposure yet face glacial adoption due to regulatory frameworks, implementation costs, or error intolerance. Conversely, occupations with moderate exposure but low barriers might see rapid transformation.

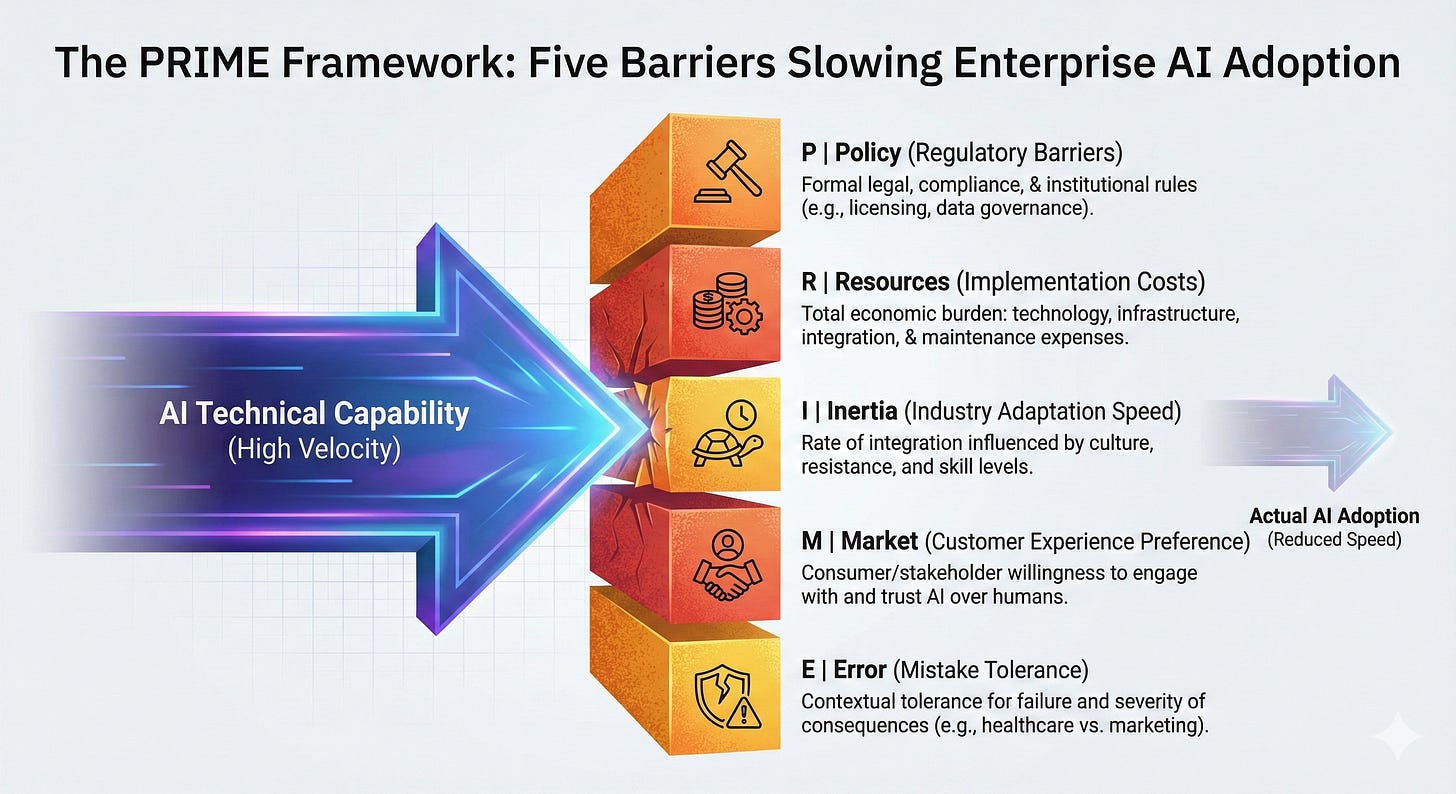

What emerged from deployment analysis is a structured framework identifying five distinct barriers that determine whether AI deployment happens rapidly, slowly, or not at all. The framework proposes that job displacement operates not through simple technical substitution but through identifiable mechanisms that either clear the path for transformation or create persistent friction that slows or prevents adoption altogether. The five barriers (Policy, Resources, Inertia, Market acceptance, and Error tolerance) provide a clearer way to predict how AI will actually reshape work in specific contexts.

This paper presents these barriers, grounds them in existing research on technology adoption and workforce transformation, and demonstrates their application across different occupations.

Theoretical Foundation

The framework builds on established technology adoption research. Rogers’ Diffusion of Innovations (1962) identified factors like relative advantage, compatibility, and complexity that influence how innovations spread through social systems. Davis’s Technology Acceptance Model (1989) showed that perceived usefulness and ease of use drive individual technology acceptance. Acemoglu and Restrepo’s task-based framework (2019) demonstrated that automation creates displacement effects counterbalanced by productivity gains and new task creation.

Recent empirical work validates the importance of deployment barriers. MIT CSAIL’s 2024 study found that only 23 percent of worker compensation exposed to AI computer vision would be cost-effective to automate due to large upfront costs. The World Economic Forum’s 2025 Future of Jobs Report found that 63 percent of employers identify skills gaps and organizational culture as primary barriers to transformation—up from 60 percent in 2023.

This framework addresses a critical gap: while existing research explains technology diffusion patterns, user acceptance, and post-adoption labor market effects, no framework systematically predicts when technically feasible AI will actually be deployed at enterprise scale. The five barriers presented here—Policy, Resources, Inertia, Market acceptance, and Error tolerance—operationalize the specific mechanisms that determine whether AI moves from demonstration to production deployment.

The Five Barriers to Enterprise AI Adoption

The framework identifies five categories of barriers: Policy, Resources, Inertia, Market acceptance, and Error tolerance, forming the acronym PRIME. Each can be assessed on its own, but the barriers compound, and their combined effect determines deployment velocity and ultimate impact on work.

Barrier 1: Policy (Regulatory Barriers)

Definition: Policy barriers comprise the formal legal, compliance, and institutional requirements that govern AI deployment in specific industries or contexts. These include licensing requirements, liability frameworks, data governance rules, sector-specific regulations, and the pace at which regulatory bodies adapt to technological change.

The first barrier concerns the regulatory environment surrounding specific types of work. Some industries face minimal regulation; others operate under complex legal frameworks that presume human decision-making and create substantial friction for AI deployment even when the technology is ready.

Autonomous trucking provides a clear example. The technology for self-driving trucks on highways has reached impressive maturity; several companies have demonstrated safe long-haul autonomous freight transport. But deployment faces a labyrinth of regulatory complexity: federal regulations governing commercial vehicles were written assuming human drivers; Commercial Driver’s License requirements and Department of Transportation oversight presume human operators; state-by-state regulatory variations create a patchwork where autonomous trucks might be legal in one state but prohibited in the next; many states explicitly require human drivers by law or are actively debating autonomous vehicle rules with no consensus.

This regulatory fragmentation prevents deployment at scale regardless of technical readiness. A freight company cannot operate autonomous trucks commercially when regulations haven’t caught up to technology, when liability frameworks remain unclear, and when the legal landscape varies dramatically across jurisdictions. Complete elimination of truck driver jobs probably requires decades, not years, because regulations need to evolve, infrastructure must be built, and society needs to become comfortable with 80,000-pound autonomous vehicles sharing highways with human-driven cars.

Barrier 2: Resources (Implementation Costs)

Definition: Resource barriers represent the total economic burden of deploying AI systems at production scale, including not only direct technology expenses but also required infrastructure investments, integration complexity, ongoing maintenance requirements, and organizational adaptation costs necessary to operationalize the system within existing workflows.

The second barrier concerns how expensive it is to actually deploy AI technology in real-world production environments—not in demonstrations or pilot programs, but at scale across operations with all their complexity and established infrastructure. Consider a scenario where a coffee shop in India could theoretically replace a human barista with a perfectly functioning humanoid robot capable of making coffee, handling orders, and managing transactions. On paper, the robot appears economically superior: it works continuously without rest, maintains consistent quality, and requires only electricity and internet connectivity after initial investment.

But here’s what the simple analysis misses: the coffee shop would need reliable high-speed internet to support the robot’s AI systems; climate-controlled environment with air conditioning to prevent hardware failures in India’s heat; potentially a dedicated server infrastructure with additional cooling; regular technical servicing and maintenance for sophisticated robotics; and substantial AI computing costs for every customer interaction. All of that infrastructure investment would far exceed the cost of hiring a human barista in a context where labor costs are low and the necessary supporting infrastructure doesn’t already exist. The same deployment might make economic sense in a different country where coffee shops already have robust climate control and connectivity, but implementation costs are context-dependent, varying by geography, industry, and existing infrastructure.

MIT CSAIL research in 2024 found that only 23 percent of worker compensation exposed to AI computer vision would be cost-effective for firms to automate, with large upfront costs of AI systems making most tasks economically unattractive to automate. This empirical finding validates what deployment analysis reveals: technical capability and economic viability often diverge significantly.
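The gap between technical capability and economic viability can be made concrete with a rough break-even check. The Python sketch below is illustrative only: the function names are hypothetical, every figure is invented for the example (none comes from the MIT CSAIL study or from real deployments), and it assumes simple straight-line spreading of upfront cost with no discounting.

```python
def annualized_automation_cost(upfront: float,
                               lifetime_years: float,
                               annual_operating: float) -> float:
    """Spread the upfront investment evenly over the system's life
    and add yearly running costs (connectivity, cooling, servicing, compute)."""
    if lifetime_years <= 0:
        raise ValueError("lifetime_years must be positive")
    return upfront / lifetime_years + annual_operating


def is_cost_effective(annual_labor_cost: float, automation_cost: float) -> bool:
    """Automation is attractive only if it costs less per year than the labor it replaces."""
    return automation_cost < annual_labor_cost


# Hypothetical figures in an arbitrary currency unit, chosen only to show the mechanism.
robot_cost = annualized_automation_cost(
    upfront=60_000, lifetime_years=5, annual_operating=8_000
)

low_wage_context = 3_000    # assumed yearly cost of a barista where labor is cheap
high_wage_context = 35_000  # assumed yearly cost where labor is expensive

print(is_cost_effective(low_wage_context, robot_cost))   # False
print(is_cost_effective(high_wage_context, robot_cost))  # True
```

The same machine fails the check in one setting and passes it in another, which is the sense in which implementation costs are context-dependent.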

Barrier 3: Inertia (Industry Adaptation Speed)

Definition: Inertia describes the rate at which specific sectors integrate new technologies into standard practice, influenced by organizational culture, competitive dynamics, institutional resistance, workforce skill levels, and the consequences of rapid versus cautious adoption. This barrier determines how quickly technically feasible and economically viable AI solutions actually diffuse through an industry.

The third barrier addresses how quickly specific industries actually integrate new technologies once other barriers are overcome. Some industries move with remarkable speed; others are structurally conservative and slow to change regardless of technology readiness.

K-12 education exemplifies high inertia. The sector faces teacher resistance to new tools, inadequate training infrastructure, severe budget constraints, and institutional conservatism rooted in the high stakes of educating children. Technology advances faster than classroom adoption; tools that could improve learning sit unused because implementation requires changing how thousands of teachers work, retraining entire workforces, and convincing conservative school boards to invest in systems that challenge traditional pedagogy. Education is not unique in this—healthcare, government, and legal services all show similar patterns of slow, cautious integration.

Contrast this with the technology industry itself, which adopts innovations almost instantly. Tech companies value speed, embrace experimentation, accept rapid change as normal, and face intense competitive pressure to integrate productivity-enhancing tools before competitors do. The “move fast” culture means AI coding assistants went from novel experiment to industry standard in roughly eighteen months. Change management and cultural resistance—the fuzzy concepts that often explain slow adoption—fit squarely into this barrier: they describe industries where adaptation is structurally slow regardless of other factors.

Barrier 4: Market (Customer Experience Preference)

Definition: Market barriers encompass both consumer willingness to engage with AI-mediated services and stakeholder comfort with AI systems replacing or augmenting human judgment in specific contexts. This barrier operates through trust dynamics, preference for human interaction, and perceived value of human expertise beyond pure task performance.

The fourth barrier addresses whether customers, clients, or other stakeholders actually want to interact with AI systems instead of humans, even when the AI performs the task competently. This operates at multiple levels: end consumers may prefer human service for relationship-intensive work; business clients may hesitate to trust AI recommendations for high-stakes decisions; and employees themselves may resist systems that change how they work.

Consider mental health therapy. AI chatbots and therapeutic support systems have demonstrated clinical effectiveness for certain types of therapeutic interventions, particularly for cognitive behavioral therapy and anxiety management. The technology works; studies show positive outcomes. But market acceptance remains mixed at best—many people seeking mental health support specifically want human empathy, the feeling of being understood by another person, and the trust that comes from human-to-human connection. The technology’s capability isn’t the constraint; willingness to use it is.

Acceptance also varies across stakeholder groups. Research on robo-advisors found that older adults showed very limited uptake because they were less likely to trust the technology. Research has also linked greater awareness of automation among employees to reduced organizational commitment and higher turnover intentions. Market acceptance isn’t monolithic; it varies by demographic, context, and the specific human needs being addressed.

Barrier 5: Error (Mistake Tolerance)

Definition: Error tolerance describes the tolerance for mistakes within a specific operational context, determined by the severity of consequences from system failures, liability frameworks governing responsibility for AI errors, and the reversibility of decisions made by or with AI assistance. Low error tolerance creates high barriers to adoption regardless of average system accuracy.

The fifth barrier examines how much tolerance exists for AI mistakes in specific contexts. Some domains accept errors as part of normal operation; others cannot tolerate even rare failures. This fundamentally shapes deployment timelines regardless of AI capability.

Healthcare illustrates low error tolerance. An AI diagnostic system might achieve 98 percent accuracy—substantially better than human diagnostic error rates in many contexts—but that 2 percent failure rate carries catastrophic consequences. You cannot tell a patient’s family “the AI is right ninety-eight percent of the time” when their loved one died because of the two percent error. Medical malpractice liability, ethical obligations, and the fundamental reality that healthcare involves life-and-death stakes all create extremely low error tolerance that slows AI adoption regardless of capability. The system must approach near-perfect performance, undergo extensive clinical validation, and operate under careful human oversight before wide deployment becomes acceptable.

Compare this to content creation, marketing, or business communication, where errors are inconvenient but rarely catastrophic. If AI writes marketing copy and makes a mistake, you fix it and republish; the worst case is minor embarrassment and correction effort. This high error tolerance explains why AI writing tools have seen explosive adoption in marketing and communications, while AI diagnostic tools in healthcare face years of additional validation despite often exceeding human performance.

Note: The irony here is profound: AI might actually be most beneficial in high-stakes fields precisely because humans make so many errors. Ninety-four percent of road crashes are tied to human error; medical diagnostic errors affect millions of patients annually. AI could save lives by reducing these errors—but low error tolerance in these fields often means deployment is slower where AI could help most, and faster where errors matter least.
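One way to see why the same accuracy is acceptable in marketing and unacceptable in healthcare is to weight the error rate by what each error costs. The Python sketch below is a minimal illustration, not a validated risk model: the function name is hypothetical, the 98 percent accuracy figure is the one used in the healthcare example above, and the cost-per-error values are invented placeholders in arbitrary units.

```python
def expected_error_cost(accuracy: float, cost_per_error: float) -> float:
    """Expected cost per decision: probability of an error times the cost of one error."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return (1.0 - accuracy) * cost_per_error


ACCURACY = 0.98  # same system accuracy in both domains

# Hypothetical severity figures: a marketing typo is cheap to fix,
# a diagnostic miss is assumed to be many orders of magnitude worse.
marketing_cost = expected_error_cost(ACCURACY, cost_per_error=50)
healthcare_cost = expected_error_cost(ACCURACY, cost_per_error=1_000_000)

print(f"marketing:  {marketing_cost:,.2f} per decision")
print(f"healthcare: {healthcare_cost:,.2f} per decision")
print(f"ratio:      {healthcare_cost / marketing_cost:,.0f}x")
```

Under these assumed figures the error rate is identical, yet the expected cost per decision differs by the full ratio of the severities. The sketch leaves out liability and irreversibility, which the definition above treats as further parts of the barrier.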

How Barriers Compound

These barriers don’t operate independently; they compound. When multiple barriers are high, AI adoption slows dramatically even when technical capability exists. When multiple barriers are low, adoption happens explosively fast regardless of remaining challenges.

Return to the opening examples. ATMs faced four out of five significant barriers: resource costs were substantial—banks had to invest heavily in machines, build secure infrastructure, and maintain networks across locations; market acceptance was mixed—customers wanted convenience but also valued human service for complex banking; error tolerance was low—malfunctions or security breaches damaged customer trust and created liability; and banking faced high inertia, moving cautiously with new technology. Only policy barriers were moderate. Four major barriers slowed adoption and shaped how ATMs were deployed, ultimately leading them to complement tellers rather than replace them.

Travel agents faced almost no barriers: resource costs were minimal—customers just needed internet access they already had; market acceptance was high—people preferred the convenience and control of booking themselves; error tolerance was high—booking mistakes were annoying but easily fixed; virtually no policy regulations governed travel booking; and the travel industry showed low inertia, adapting quickly because competitive pressure from early online adopters forced others to follow. Zero meaningful barriers meant the technology could do the work, and nothing prevented it from doing so at scale. Travel agent jobs collapsed.

This same analysis applies to any occupation facing AI exposure. The question isn’t whether AI can do the job—it probably can do substantial portions of work already, or will be able to soon.

The question is: how many barriers stand between AI’s capability and actual deployment at scale in specific contexts?

Software developers face high AI exposure but surprisingly few barriers: resource costs are trivial—twenty dollars per month for AI coding tools; market acceptance is high because technology companies care about productivity more than whether humans or AI wrote the code; error tolerance is relatively high because bugs are expected and testing catches most problems; no policy regulations govern who can write code or require human programmers; and the tech industry shows minimal inertia, adopting new tools instantly. With only one significant barrier—the need for human oversight to catch AI mistakes and make architectural decisions—AI has spread rapidly through software development, but the work has transformed rather than disappeared because that remaining barrier is fundamental to how software gets built.

Healthcare practitioners face high AI exposure but every single barrier is substantial: resource costs are high—medical AI systems require expensive integration with hospital infrastructure and extensive validation; market acceptance is mixed—patients want human doctors even when AI might be more accurate; error tolerance is extremely low—medical mistakes kill people; policy regulations require physician oversight and impose liability on human doctors for AI recommendations; and healthcare demonstrates extreme inertia, among the slowest-adopting industries due to institutional conservatism. Five out of five barriers create a context where AI deployment happens gradually over decades, not years, despite impressive technical capabilities.
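The barrier counts in three of the examples above can be tallied mechanically. The Python sketch below is illustrative only: the `BarrierProfile` class and its field names are hypothetical, and each barrier is reduced to a yes/no judgment of whether the text above treats it as significant for that case.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class BarrierProfile:
    """True means the barrier is treated as significant in this context."""
    policy: bool
    resources: bool
    inertia: bool
    market: bool
    error: bool

    def significant(self) -> list[str]:
        """Names of the barriers marked significant."""
        return [f.name for f in fields(self) if getattr(self, f.name)]

    def significant_count(self) -> int:
        return len(self.significant())


# Yes/no readings taken from the narrative above, not from measured data.
PROFILES = {
    "ATMs": BarrierProfile(
        policy=False, resources=True, inertia=True, market=True, error=True
    ),
    "Travel agents": BarrierProfile(
        policy=False, resources=False, inertia=False, market=False, error=False
    ),
    "Healthcare practitioners": BarrierProfile(
        policy=True, resources=True, inertia=True, market=True, error=True
    ),
}

for name, profile in PROFILES.items():
    print(f"{name}: {profile.significant_count()} of 5 -> {profile.significant()}")
```

A yes/no encoding is the coarsest possible choice. A graded scale would capture distinctions such as "moderate" policy barriers or "mixed" market acceptance, at the cost of requiring thresholds that the framework does not yet specify.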

Conclusion

The ATM paradox—technology that could replace workers but instead transformed their roles—will repeat across countless occupations as AI capabilities expand. But the outcomes won’t be uniform, and they won’t follow simple predictions based on technical capability alone. Understanding the five barriers to enterprise AI adoption suggests that AI’s impact on work will be determined not primarily by what AI can do, but by the specific combination of Policy, Resources, Inertia, Market, and Error factors that either enable or prevent deployment in particular contexts.

We stand at a moment where AI technical capabilities are advancing faster than our frameworks for understanding their real-world implications. The urgent question isn’t whether AI will transform work—it will—but rather how to predict, prepare for, and shape those transformations in ways that benefit both organizations and workers. The PRIME Framework offers one approach to moving beyond speculation toward more rigorous, context-specific analysis of AI’s actual impact on the future of work.

Appendix

Methodology Note

This framework emerged from analysis of over 50 enterprise AI deployment projects spanning healthcare, financial services, technology, manufacturing, education, and professional services sectors. The analysis drew on my experience working with HR executives navigating AI-related workforce transformation and building AI-powered skills systems at Microsoft (People Skills) for skills discovery and workforce development. I examined implementation timelines, adoption patterns, organizational barriers, and ultimate outcomes to identify recurring factors that accelerated or impeded deployment regardless of technical capability.

The framework synthesizes my empirical observations with established research on technology adoption (Rogers, Davis), organizational change theory, and workforce transformation studies from the World Economic Forum, McKinsey Global Institute, and MIT Digital Economy research. The five barrier dimensions were refined through application to diverse occupational contexts and validation against observed adoption patterns in industries ranging from software development to healthcare to transportation.

Academic and Industry Application

This framework provides a structured approach for researchers, policymakers, and organizations to assess AI adoption likelihood in specific contexts. Rather than relying on generalized predictions about AI’s impact on work, it enables context-specific analysis that accounts for economic, social, regulatory, and organizational factors shaping actual deployment.

For researchers, the framework offers testable propositions about adoption velocity across different barrier configurations. Future work could quantify barrier strength across industries, develop predictive models for adoption timelines, and examine how interventions targeting specific barriers accelerate or enable deployment.

For academic or industry reference, please cite as: Bajaj, A. (2025). The PRIME Framework: The 5 barriers To Enterprise AI adoption

For policymakers, understanding these barriers enables more effective interventions. Infrastructure investment (Resources), regulatory modernization (Policy), workforce development programs (Inertia), and innovation support can target the specific constraints preventing beneficial AI adoption in priority sectors.

For organizations, the framework provides a diagnostic tool for assessing AI deployment feasibility. Rather than pursuing AI initiatives based solely on technical capability, leaders can systematically evaluate whether their specific context presents insurmountable barriers or clear paths to successful implementation.

Implications for U.S. Workforce Competitiveness

Understanding these adoption barriers carries significant implications for national workforce strategy and economic competitiveness, enabling more precise policy interventions than broad assumptions about AI’s inevitable displacement of workers.

First, skills gap analysis must account for barrier dynamics rather than treating AI exposure scores as displacement predictions. High-exposure occupations facing multiple barriers require different workforce development strategies than high-exposure occupations with few barriers. Investment in retraining should prioritize occupations where barriers are low and transformation is imminent, rather than distributing resources evenly across all AI-exposed work.

Second, productivity gains from AI will concentrate in sectors with low barriers, potentially widening economic inequality between industries and regions. Areas where resource costs are high—often rural or economically disadvantaged regions lacking robust infrastructure—may see delayed AI benefits even when the technology could improve outcomes. Policy interventions addressing infrastructure gaps could accelerate beneficial AI adoption while reducing geographic inequality.

Third, policy adaptation speed directly affects competitive positioning. Industries in which the United States maintains regulatory clarity, with frameworks that enable responsible AI deployment while managing risks, will attract investment and talent. Jurisdictions that update policy thoughtfully to accommodate AI capabilities while maintaining appropriate safeguards gain economic advantage over those paralyzed by regulatory uncertainty.

Fourth, workforce mobility and career transitions depend on understanding which skills face genuine displacement risk versus transformation. Workers in high-barrier occupations may have longer time horizons for adaptation but should still develop AI literacy; workers in low-barrier occupations face more urgent pressure but also more immediate opportunities to leverage AI for productivity gains. Educational institutions and workforce development programs need barrier-informed guidance to prepare workers effectively.

Finally, national AI strategy should recognize that enabling beneficial adoption requires addressing all five barriers systematically, not just advancing technical capabilities.

Relationship to Existing Research

This framework complements rather than contradicts established technology and labor economics research, but addresses a distinct analytical question.

Rogers (1962) and Davis (1989) effectively predict technology diffusion patterns and individual acceptance based on perceived attributes like relative advantage, compatibility, and ease of use. However, both frameworks were developed before AI’s unique characteristics emerged and focus primarily on adoption decisions rather than deployment economics. Rogers documents how innovations spread through social systems but doesn’t systematize the economic and organizational barriers that prevent deployment even when individuals perceive an innovation positively. TAM predicts individual behavioral intentions but doesn’t address enterprise-level implementation costs, regulatory constraints, or error tolerance requirements.

Acemoglu & Restrepo (2019) provide the definitive framework for understanding what happens after automation is deployed: displacement effects that reduce labor demand, counterbalanced by productivity gains and the creation of new labor-intensive tasks that “reinstate” workers. Their research shows that approximately half of employment growth from 1980-2015 occurred in occupations where job titles or tasks fundamentally changed. This is critical for understanding long-term labor market dynamics—but it analyzes post-adoption transformation, not pre-adoption deployment barriers. Their framework explains why jobs transform rather than disappear; this framework explains why some jobs face rapid AI deployment while others see glacial adoption despite similar technical feasibility.

World Economic Forum reports document employer perceptions, intentions, and identified obstacles across industries. The 2025 report’s finding that 63 percent of employers cite skills gaps as a primary barrier (up from 60 percent in 2023) provides valuable empirical validation of the Inertia barrier. However, WEF reports describe what employers experience without systematizing the underlying mechanisms or providing a predictive framework for assessing deployment likelihood in specific contexts.

MIT CSAIL’s 2024 economic viability study directly supports the Resources barrier by showing that only 23 percent of worker compensation exposed to AI computer vision would be cost-effective to automate, despite technical capability. This empirical finding confirms that technical feasibility diverges dramatically from economic deployment, which is precisely the gap this framework addresses.

What this framework adds: A systematic, predictive model for assessing when and why technically feasible AI will actually be deployed at scale. While existing research explains individual acceptance (TAM), diffusion patterns (Rogers), post-adoption labor effects (Acemoglu & Restrepo), and employer perceptions (WEF), no framework operationalizes the five specific barriers—Policy, Resources, Inertia, Market, Error—that determine deployment velocity. This framework enables context-specific predictions: a healthcare AI system might score high on technical capability but face all five barriers at high levels, predicting decades-long gradual adoption; a software development AI tool might face only one barrier (human oversight for architecture decisions), predicting rapid transformation.

The framework deliberately focuses on barriers rather than enablers because deployment analysis requires understanding what prevents adoption despite technical readiness. This asymmetry—where the presence of even one severe barrier can block deployment regardless of how many factors favor adoption—makes barrier analysis more predictive than factor-based models for enterprise AI deployment.
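The asymmetry described above can be illustrated by comparing two ways of aggregating barrier scores. The Python sketch below is a toy comparison under stated assumptions: the 0-to-1 scores, the `SEVERE` threshold, and the function names are all hypothetical and are not part of the framework as published.

```python
from statistics import mean

SEVERE = 0.8  # assumed cutoff above which a single barrier blocks deployment


def average_score(scores: dict[str, float]) -> float:
    """Factor-style aggregation: every barrier counts equally."""
    return mean(scores.values())


def bottleneck(scores: dict[str, float]) -> tuple[str, float]:
    """Barrier-style aggregation: only the single worst barrier matters."""
    worst = max(scores, key=scores.get)
    return worst, scores[worst]


# Hypothetical context: four barriers are low, one is severe.
scores = {
    "policy": 0.9,
    "resources": 0.1,
    "inertia": 0.1,
    "market": 0.1,
    "error": 0.1,
}

avg = average_score(scores)
worst_name, worst_value = bottleneck(scores)

print(f"average barrier score: {avg:.2f}")
print(f"worst barrier: {worst_name} ({worst_value:.1f})")
print(f"blocked under bottleneck view: {worst_value >= SEVERE}")
```

With these invented scores the average looks favorable while the bottleneck view flags the context as blocked. That is the case in which a barrier-focused reading and a factor-averaging reading give different predictions.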

This paper is part of a broader research program examining AI’s impact on work and careers, presented in the forthcoming book “Indispensable.”