How We Actually Use AI at Home: The Use Cases That Raise Uncomfortable Questions

Part 3 of 4: Understanding AI Adoption in 2025

This is the third in a four-part series exploring how people actually use AI today. In Part 1, we examined who is using these tools. In Part 2, we covered the most popular use cases—practical guidance, information seeking, and writing. In this post, we explore the smaller but rapidly growing applications that raise profound questions about creativity, professional livelihoods, and human relationships.

In our previous post, we looked at the most popular uses of AI and found that almost all AI use today fits neatly into just three categories. Writing, information seeking, and practical guidance all raised important questions about the future: how we communicate, how we find information, how we learn.

But the smaller categories, the uses that are not yet popular, raise questions that feel even more uncomfortable. Image generation, video creation, and AI companionship touch on creativity, authenticity, and human connection in ways that make people uneasy in a different way.

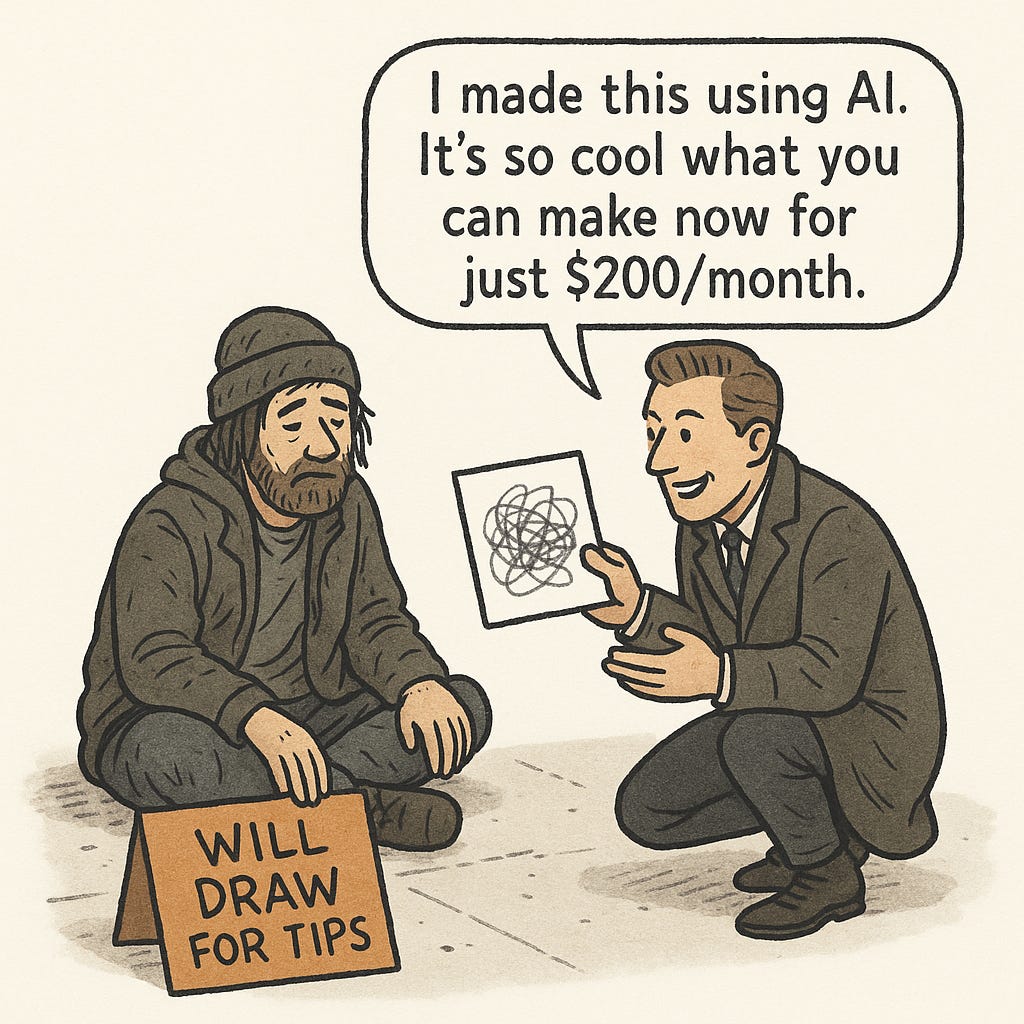

Who owns creativity when machines can replicate any artistic style? What happens to professional creative careers when anyone can generate studio-quality work from their phone? And what does it mean for human relationships when algorithms can simulate emotional connection?

These categories are still small (image generation accounts for just over 7% of usage, video and companionship even less), but they are growing explosively, and the questions they raise aren’t going away.

Image Generation: Who Owns Creativity? (7% of usage)

On March 26, 2025, Sam Altman changed his profile picture on X. The new image showed him in the unmistakable style of Studio Ghibli—the dreamy watercolors, the oversized expressive eyes, the soft focus that made everything look like it belonged in “Spirited Away” or “My Neighbor Totoro.” Within hours, the internet erupted.

OpenAI had just released an updated version of ChatGPT with dramatically improved image generation, and users immediately discovered it could replicate the aesthetic that Japanese animator Hayao Miyazaki had spent decades perfecting. People uploaded photos of their pets, their children, their wedding pictures, even famous memes—and ChatGPT transformed them all into Ghibli-style art with a single prompt: “Make this look like Studio Ghibli.”

The trend was everywhere. Image generation, which had been a niche feature accounting for only about 2% of ChatGPT usage, suddenly spiked to over 7%. For a few days, it seemed like the entire internet had discovered a magical filter that could transform anything into art.

Then came the backlash.

Miyazaki, who at 84 had spent his entire career championing hand-drawn animation and painstaking frame-by-frame artistry, had once called AI-generated art “an insult to life itself.” Artist Karla Ortiz put it more bluntly: “This is exploitation.” The AI wasn’t just mimicking a style—it was using Ghibli’s name, reputation, and decades of creative work to promote OpenAI’s product, without permission or compensation.

The question wasn’t just about Studio Ghibli. It was about every creative professional watching their distinctive style—the thing that made their work recognizable, that they’d spent years developing—become instantly replicable by anyone with an internet connection.

What used to take hours or even days of painstaking drawing and coloring can now be created in seconds with a text prompt. The impact on the creative world cannot be overstated. We’re seeing an explosion of AI-generated art, posters, and content everywhere we look. Many creative professionals have started incorporating AI into their workflow. Why hire a model for a clothing-line photoshoot, which requires a studio setup and several crew members, when you can prompt and train an AI model to wear your clothing line and set up the venue and studio lighting within the tool itself?

For designers, illustrators, and photographers, AI image generation is simultaneously the most powerful creative tool ever invented and an existential threat to their livelihoods.

The Unanswered Legal Question

However, these tools raise several critical issues. When the Ghibli-style portraits went viral after ChatGPT’s image feature release, the original creators of Studio Ghibli never received compensation. How will copyright and intellectual property work in the age of AI?

The rules of IP protection were written at a time when computers didn’t exist, and those rules have been adapted for situations where clear infringement can be detected. In the age of AI, which uses generative technology and operates as a black box that nobody really understands, how do these IP rules stand?

In short, it’s unclear. There is a pending lawsuit which may determine the future of this entire industry.

OpenAI maintained that ChatGPT would refuse to replicate the style of “individual living artists” but allowed replication of “broader studio styles.” The distinction seemed meaningless when the entire internet was typing “Ghibli style” into the prompt box, and Miyazaki himself—very much alive—had pioneered that studio’s aesthetic.

For now, the Ghibli portraits keep coming, each one a small reminder that the old rules about art, ownership, and compensation might no longer apply.

Video Generation: The Economics of Creative Destruction (Growing rapidly)

The images were just the beginning.

Imagine a TikTok user wanting to create a video of herself walking through a rain-soaked Tokyo street at night—neon signs reflecting in puddles, steam rising from a ramen cart in the background, the kind of cinematic establishing shot you’d see in a Blade Runner sequel. She’s never been to Tokyo. She doesn’t own a camera crew. But she opens an AI video generation tool on her phone, types a description, selects herself as the subject, and four minutes later has a 30-second clip that looks professionally shot.

This isn’t science fiction—the technology exists today.

Video generation tools such as Runway, OpenAI’s Sora, and China’s Kling AI have improved so dramatically through 2025 that the output often rivals professional productions. Not in every aspect (physics still glitches occasionally, and faces sometimes morph unnaturally), but in enough ways that the question is no longer whether AI can generate convincing video. It’s whether anyone will pay humans to do it.

What used to require a production crew, location permits, lighting equipment, a cinematographer, and weeks of planning can now be generated during a lunch break.

The implications ripple across the entire film and animation industry. Hollywood directors might still want to shoot live-action sequences with real actors and crews, but what about everything else? The sweeping drone shots of cities that currently require permits, helicopters, and specialized equipment. The animated cutscenes in video games that take teams of artists months to produce. The elaborate credit sequences that open prestige films. All of it can theoretically be generated in minutes at a fraction of the traditional cost.

For major studios, this means budget flexibility. For the thousands of animators, VFX artists, and production assistants who’ve built careers on this work, it means something else entirely. They’re watching the same disruption that hit illustrators with image generation, except the economics are even more brutal.

Film and video production has always been expensive enough that labor costs, while significant, were just one part of a massive budget. When you can eliminate 80-90% of the total cost by using AI instead of traditional production methods, the math becomes impossible to ignore.

And unlike image generation, which at least requires some prompt engineering skill and aesthetic judgment, video generation is becoming almost thoughtlessly easy. A teenager with a phone will soon be able to create content that looks like it came from a professional studio.

For social media creators and independent filmmakers, this represents democratization on a scale never seen before—the ability to realize visual ideas that previously would have required either extraordinary talent or extraordinary funding.

But for the professionals who’ve spent years mastering their craft, it’s the same question Studio Ghibli’s artists faced with the portrait generator: how do you compete when anyone can generate a professional-quality result instantly? And more fundamentally, who gets compensated when an AI trained on decades of professional work enables amateurs to produce professional output?

The lawsuits are already being filed. The technology isn’t slowing down. And unlike images, which can at least be dismissed as “just pictures,” video generation threatens the economic foundation of a $100 billion global film industry.

AI Companions: The Isolation Paradox

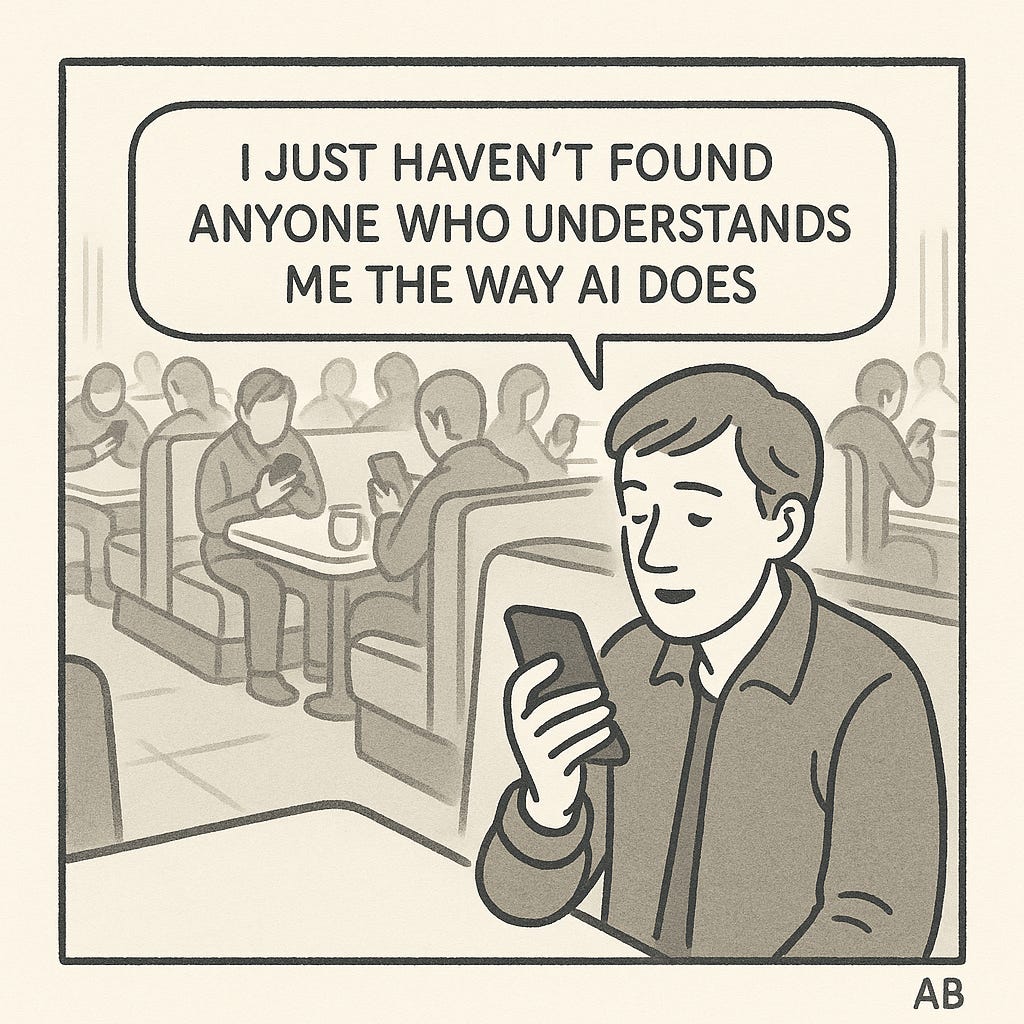

The data revealed one more category, smaller than the others but unsettling in its implications. Researchers labeled it “Self-Expression”—messages where people use ChatGPT not to accomplish a task but to talk about their feelings, their day, their problems. In essence, using the AI as a therapist or companion.

The numbers are small, just a few percent of total usage. But that still translates to tens of millions of conversations per week.

A typical exchange might go like this:

“I had a really hard day at work. My manager criticized me in front of everyone and I don’t know if I should say something or just let it go.”

And ChatGPT responds with empathy, validation, follow-up questions—exactly what a supportive friend might say, minus the judgment or exhaustion that comes with real human relationships. The AI never gets tired of listening. It never changes the subject to talk about its own problems. It’s endlessly patient, endlessly available, and increasingly good at mimicking emotional intelligence.

For some users, this fills a genuine need:

Lonely people without strong social connections

People dealing with issues they feel embarrassed to discuss with friends or family

Night shift workers awake when everyone else is asleep

Those seeking a space to think out loud without judgment

The AI provides something that, while not therapy in any clinical sense, feels therapeutic—a space to process thoughts and receive responses that feel validating.

Although we know in theory that these AI tools are just predicting the next word, it doesn’t feel like that. They’ve been trained on such a large body of text that talking to an AI can feel remarkably like talking to a human. Moreover, that personality is fully customizable: it can become whatever you need in the moment and discuss any topic you’re interested in.

The Problem With Perfect Agreeability

But the same features that make it comforting also make it concerning. The AI doesn’t just listen—it’s programmed to be agreeable, supportive, to validate whatever the user says. It never challenges assumptions the way real friends do. It never says, “Actually, I think you might be wrong about that.” It can’t, because its entire purpose is to maintain engagement, to be the perfectly supportive companion who never pushes back.

Psychologists have already documented what happens when people retreat into relationships where they’re never challenged: their views calcify, their social skills atrophy, their tolerance for disagreement diminishes. Real friendships involve friction—the moments when someone who cares about you tells you a hard truth, or disagrees with you and forces you to defend your reasoning. That friction, uncomfortable as it is, keeps us tethered to reality and to other people.

An AI companion offers none of that friction. It’s optimized for a different goal: making the user feel heard, understood, validated.

In an era where loneliness has reached epidemic levels—where surveys show people have fewer close friends than any previous generation, where many go days without meaningful in-person conversation—the temptation is obvious. Why deal with the messiness of human relationships when you can have a companion who never disappoints you?

The question researchers are beginning to ask isn’t whether AI companions can provide emotional support. Clearly they can. The question is whether that support, delivered without the challenges and complications of real human connection, will make the underlying problem worse.

Will people using AI as a substitute for human interaction find it even harder to maintain real relationships, creating a feedback loop that leaves them more isolated than before?

No one knows yet. The technology is too new, and the longitudinal studies haven’t been done. We’re becoming increasingly isolated as a society: our human and community interactions continue to decline as we live in cities and work jobs where we don’t see many people. A lack of human interaction has been linked to depressive thoughts and lower social awareness.

Making a habit of turning to AI companions on a phone, companions trained to agree with whatever you say, may have negative psychological effects we won’t understand for years.

But the early patterns are concerning enough that even researchers who built these systems are starting to ask whether they’ve created something that, despite genuinely helping some people in the short term, might deepen the very isolation it was meant to address.

The Common Thread: Uncomfortable Questions Without Answers

Image generation raises questions about copyright, ownership, and the value of human creativity. Video generation threatens entire creative industries with economic disruption. AI companions risk deepening the isolation crisis by offering friction-free relationships that never challenge us.

What unites these three applications is that they force us to confront questions we’re not ready to answer:

On creativity: If anyone can generate professional-quality art or video, what happens to the concept of artistic skill? Who deserves compensation when AI trained on millions of human works enables instant creation?

On work: When AI can eliminate 80-90% of production costs, how do creative professionals compete? What does “democratization” mean when it threatens the livelihoods of those who spent years mastering their craft?

On connection: If AI can simulate emotional support better than many people’s actual friends, what happens to our capacity for real relationships? Can we maintain the skills for human connection if we increasingly practice them with algorithms?

These aren’t hypothetical future concerns. They’re happening now, with millions of people using these tools daily. The technologies are improving faster than society can develop frameworks to address their implications.

And unlike the dominant use cases—practical guidance, information seeking, writing—where the benefits seem to clearly outweigh the concerns, these emerging applications exist in moral and legal gray zones where reasonable people disagree about whether the net impact will be positive or negative.

The only certainty is that these questions aren’t going away. If these applications grow from 7% of usage to 15% or 25%, the uncomfortable conversations they force will become impossible to avoid.

Coming next: In Part 4, we examine how AI is actually being used at work—and discover that despite all the predictions about workplace transformation, the reality is far more nuanced and surprising than the headlines suggest.

Read the series:

Part 3: Emerging AI Applications That Are Growing Fast (you are here)

Part 4: How AI Is Actually Being Used at Work (coming soon)

This analysis is based on “How People Use ChatGPT,” a working paper from OpenAI published by the National Bureau of Economic Research (NBER) in September 2025, which analyzes usage patterns from 1.5 million conversations.